Submitting with a VO Frontend

These examples assume you have a GlideinWMS installation running and that, as a user, you have access to submit jobs. Make sure you have sourced the correct HTCondor installation.

NOTE: It is recommended that you always provide a VOMS proxy in the user job submission. This will allow you to run on a site whether or not gLExec is enabled. A proxy may also be required for other reasons, such as the job staging data.

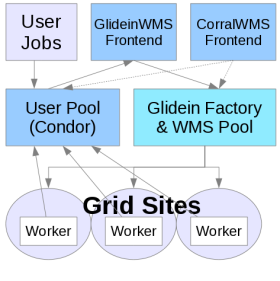

The GlideinWMS environment looks almost exactly like a regular, local HTCondor pool. It just does not have any resources attached unless you ask for them; try

$ condor_status

and you can see that no glideins are connected to your local pool. The GlideinWMS system will submit glideins on your behalf when they are needed. Some information may need to be specified for you to get glideins that can run your jobs. Depending on your VO Frontend configuration, you may also have to specify additional requirements.

Submitting a simple job with no requirements

Here is a generic job that calculates pi using the Monte Carlo method. First create a file called pi.py and make it executable:

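#!/usr/bin/env python
# Estimate pi by Monte Carlo sampling of the unit square.
from random import random
from math import sqrt, pi
from sys import argv

inside = 0
n = int(argv[1])
for i in range(0, n):
    x = random()
    y = random()
    # Count the points that fall inside the quarter circle of radius 1.
    if sqrt(x*x + y*y) <= 1:
        inside += 1
pi_prime = 4.0 * inside / n
# Print the estimate and its difference from math.pi (works in Python 2 and 3).
print("%s %s" % (pi_prime, pi - pi_prime))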

You can run it:

$ ./pi.py 1000000

3.1428 -0.00120734641021

The first number is the approximation of pi. The second number is how far from the real pi it is. If you repeat this, you will see how the result changes every time.
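The run-to-run variation comes from the random sampling: the fraction of points inside the quarter circle only approximates pi/4, and the statistical error shrinks roughly as 1/sqrt(n). The sketch below wraps the same calculation in a function so it can be repeated with different seeds. The function name estimate_pi and its seed parameter are illustrative additions and are not part of pi.py.

```python
import random
from math import pi


def estimate_pi(n, seed=None):
    """Monte Carlo estimate of pi from n random points in the unit square."""
    rng = random.Random(seed)  # private generator, reproducible for a given seed
    inside = 0
    for _ in range(n):
        x = rng.random()
        y = rng.random()
        # Same test as pi.py without the square root: x^2 + y^2 <= 1.
        if x * x + y * y <= 1.0:
            inside += 1
    return 4.0 * inside / n


if __name__ == "__main__":
    # Different seeds give different estimates, like repeated runs of pi.py.
    for seed in range(3):
        est = estimate_pi(100000, seed=seed)
        print(seed, est, pi - est)
```

Leaving seed as None draws from system entropy, which matches the behaviour of the unseeded script.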

Now, let's submit this as an HTCondor job. Because we are going to run it 100 times, it will actually be a cluster of 100 jobs. These jobs should run everywhere, so we won't need to specify any additional requirements. Create the submit file and call it myjob.sh:

Universe = vanilla
Executable = pi.py
Arguments = 10000000
Requirements = (Arch=!="")
Log = job.$(Cluster).log
Output = job.$(Cluster).$(Process).out
Error = job.$(Cluster).$(Process).err
should_transfer_files = YES
when_to_transfer_output = ON_EXIT
Queue 100

Next submit the job:

$ condor_submit myjob.sh

The VO Frontend is monitoring the job queue and user collector. When it sees your jobs and that there are no glideins, it will ask the Factory to provide some. Once the glideins start and contact your user collector, you can see them by running

$ condor_status

HTCondor will match your jobs to the glideins and the jobs will then run. You can monitor the status of your user jobs by running

$ condor_q

Once the jobs have finished, you can view the output in the job.$(Cluster).$(Process).out files.
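Each output file holds one line with the estimate and its error, so the results of the whole cluster can be combined once it finishes. A minimal sketch, assuming the output files sit in one directory and follow the job.<cluster>.<process>.out naming from the submit file. The helper name average_estimates is hypothetical.

```python
import glob
import os


def average_estimates(cluster, directory="."):
    """Average the first column of every job.<cluster>.<process>.out file."""
    pattern = os.path.join(directory, "job.%s.*.out" % cluster)
    estimates = []
    for path in sorted(glob.glob(pattern)):
        with open(path) as handle:
            fields = handle.read().split()
        if fields:  # skip files from jobs that wrote no output
            estimates.append(float(fields[0]))
    if not estimates:
        raise ValueError("no output found for cluster %s" % cluster)
    # Return the mean estimate and the number of files that contributed.
    return sum(estimates) / len(estimates), len(estimates)
```

Calling average_estimates with the cluster number reported by condor_submit returns the mean of the per-job estimates together with the number of jobs that produced output.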

Understanding where jobs are running

While your jobs can run everywhere, you may still want to know where they actually ran, perhaps because you want to know whom to thank for the CPUs you consumed, or to debug problems with your program. To do this, we add some additional attributes to the submit file:

Universe = vanilla
Executable = pi.py
Arguments = 50000000
Requirements = (Arch=!="")
Log = job.$(Cluster).log
Output = job.$(Cluster).$(Process).out
Error = job.$(Cluster).$(Process).err
should_transfer_files = YES
when_to_transfer_output = ON_EXIT
+JOB_Site = "$$(GLIDEIN_Site:Unknown)"
+JOB_Gatekeeper = "$$(GLIDEIN_Gatekeeper:Unknown)"
Queue 100

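These additional attributes in the job are used by the VO Frontend to find sites that match these requirements. HTCondor also uses them to match your jobs to the right glideins. The $$(GLIDEIN_Site:Unknown) form is filled in at match time with the GLIDEIN_Site value of the matched glidein, or with Unknown if the glidein does not define it, and the expanded value is stored in the job as MATCH_EXP_JOB_Site.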
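To make the $$(Name:default) substitution in the +JOB_Site and +JOB_Gatekeeper lines concrete, here is a toy model in Python. It is not HTCondor's implementation. It only illustrates how a value is taken from the matched machine's attributes with a fallback default. The function name expand_match_refs and the site name used in the usage note are invented for illustration.

```python
import re

# Matches $$(Name) or $$(Name:default).
_MATCH_REF = re.compile(r"\$\$\(([A-Za-z_]\w*)(?::([^)]*))?\)")


def expand_match_refs(value, machine_ad):
    """Toy model: substitute $$(Name:default) from a dict of machine attributes."""
    def replace(match):
        name, default = match.group(1), match.group(2)
        if name in machine_ad:
            return str(machine_ad[name])
        if default is not None:
            return default
        # Toy behaviour only: no machine value and no default was given.
        raise KeyError(name)
    return _MATCH_REF.sub(replace, value)
```

With a machine dictionary of {"GLIDEIN_Site": "SiteA"} the string "$$(GLIDEIN_Site:Unknown)" expands to the site name, and with an empty dictionary it falls back to Unknown.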

Now submit the job cluster as before. You can monitor the running jobs with:

$ condor_q `id -un` -const 'JobStatus==2' -format '%d.' ClusterId -format '%d ' ProcId -format '%s\n' MATCH_EXP_JOB_Site
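The command above prints one line per running job: the job ID followed by the site name. A minimal sketch of tallying that output by site follows. The input format is assumed from the -format options shown, and the function name jobs_per_site and the sample site names are made up for illustration.

```python
from collections import Counter


def jobs_per_site(lines):
    """Count running jobs per site from 'cluster.proc site' lines."""
    counts = Counter()
    for line in lines:
        parts = line.split(None, 1)
        if not parts:
            continue  # ignore blank lines
        # Defensive assumption: a line with no site field counts as Unknown.
        site = parts[1].strip() if len(parts) > 1 else "Unknown"
        counts[site] += 1
    return counts


# Hypothetical sample input in the assumed format.
sample = ["12.0 SiteA", "12.1 SiteB", "12.2 SiteA", ""]
print(jobs_per_site(sample).most_common())
```

To use it on live data, the output of the condor_q command can be piped into a script that passes sys.stdin to jobs_per_site.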

Additional resources

If you need help debugging issues with running jobs, see our Frontend troubleshooting guide.